What happened

Seven families of victims of a recent mass shooting in Canada have filed lawsuits against OpenAI, alleging that the company’s artificial intelligence tools contributed to the tragedy. The suits claim that the shooter used OpenAI’s technology to plan or facilitate the attack, and they seek to hold the AI developer accountable. The families argue that OpenAI’s products were negligently designed, leaving them open to harmful use with devastating consequences.

Why it matters

The lawsuits raise critical questions about how much responsibility AI companies bear for the way their technology is used, particularly when it may enable violent crime. If a court finds OpenAI liable, the ruling could prompt significant changes in AI regulation and push developers toward stricter safety and monitoring measures. The cases also spotlight the broader societal risks of rapidly advancing AI and the need for ethical guardrails against misuse.

Background

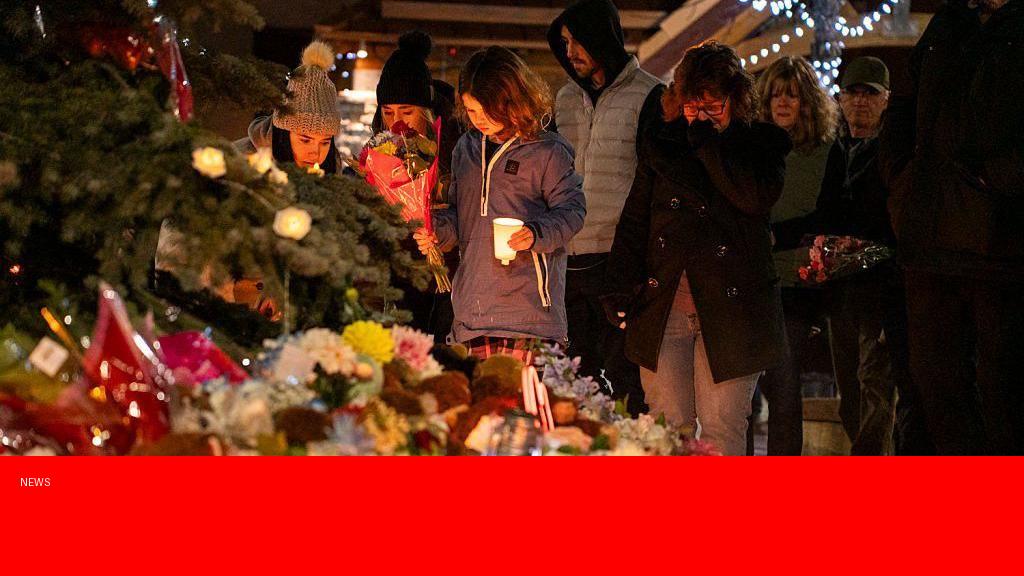

The shooting in Canada resulted in multiple fatalities and left communities deeply shaken. OpenAI, a prominent AI research and deployment company, develops widely used products such as large language models and content generation tools. Although OpenAI has implemented safety mechanisms intended to reduce harmful outputs, concerns about potential misuse have persisted. The case is among the first in which families of violent crime victims have sought legal recourse directly against an AI company, making it a pivotal moment in the ongoing debate over AI technology and accountability.

Questions and Answers

Q: On what grounds are the families suing OpenAI?

A: The families argue that OpenAI’s AI tools were negligently designed and insufficiently safeguarded, enabling the shooter to access or use them in planning the attack, thus making OpenAI partially responsible for the harm caused.

Q: Has OpenAI responded to the lawsuits?

A: At this time, OpenAI has not issued a detailed public statement regarding the lawsuits but has emphasized its commitment to safety and ethical AI development in past communications.

Q: Could this case affect future AI regulations?

A: Potentially. Depending on the outcome, lawmakers and regulators could impose stricter requirements on AI companies to prevent misuse of their technologies and ensure greater accountability.

Q: Are there precedents for suing tech companies over violent acts?

A: While tech firms have faced lawsuits for content moderation failures or data breaches, holding an AI developer directly liable for violent crimes is a relatively new and legally complex area.

Source: https://www.bbc.com/news/articles/c99l03k0ly4o?at_medium=RSS&at_campaign=rss